HOW TO CREATE CUSTOM EC2 VPCS IN AWS USING TERRAFORM

One of the fundamentals of cloud administration in AWS is knowing how to create a custom Virtual Private Cloud (VPC) that enables the launch of AWS resources such as EC2 instances into a virtual network. What gets deployed within a VPC varies across use cases, but VPCs are generally used as a foundation for the majority of AWS infrastructure components and services.

Automate for Scalability

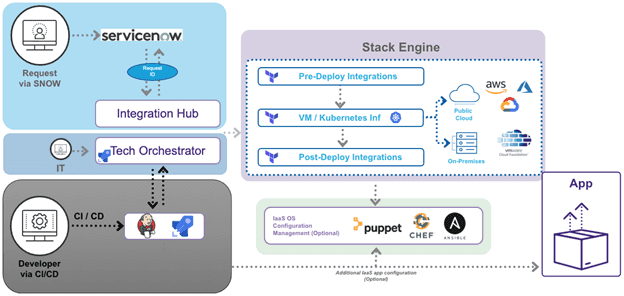

In the current world of cloud computing, gone are the days of building infrastructure components by hand. Without automation, provisioning and configuration tasks not only take days (or weeks…or months…) but are also prone to errors and inconsistencies. All of the plumbing that makes up and supports an application stack can, and should, be provisioned and configured using automation tools and infrastructure-as-code (IaC), especially when deploying applications in the public cloud. Automation makes it possible to scale and to track deployed resources.

Automation tools also make it possible to build a self-service catalog that integrates with platforms like ServiceNow. A self-service catalog can be designed within these platforms to orchestrate multiple automation tools, like Terraform and Ansible, for provisioning and configuration management of cloud resources. A self-service catalog automates workflows and approvals to enable organizations to improve the customer experience, accelerate service delivery, and reduce operational costs.

For this guide, we will be creating a custom VPC and deploying two EC2 VMs using Terraform. Terraform is an open-source IaC software tool that allows cloud architects to define components and their dependencies using relatively simple declarative configuration files. Terraform allows for provisioning, modification, and decommissioning of all cloud resources using a simple CLI workflow (write, plan, apply). Terraform has an open-source version which is free to install and use. At a larger scale, the subscription versions (Terraform Cloud and Terraform Enterprise) can be used to manage deployments for different projects and teams, and to integrate with other platforms.

Let’s get to the code! All code for this example can be found on my GitHub repo at: https://github.com/bugbiteme/demo-tform-aws-vpc

The code is broken into three different modules:

- Networking (define the VPC and all of its components)

- SSH-Key (dynamically create an SSH-key pair for connecting to VMs)

- EC2 (deploy a VM in the public subnet, and deploy another VM in a private subnet)
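As a rough illustration of how three modules like these might be wired together from a root configuration, consider the sketch below. The module paths, input names, and output names are assumptions made for this example and may not match the repository.

```hcl
# Hypothetical root module: paths, inputs, and outputs are illustrative only.
variable "region" {
  type    = string
  default = "us-west-2" # assumed region; use whichever region you work in
}

provider "aws" {
  region = var.region
}

# Builds the VPC, gateways, subnets, and security groups.
module "networking" {
  source = "./modules/networking" # hypothetical path
}

# Generates the SSH key pair used by both instances.
module "ssh_key" {
  source = "./modules/ssh-key" # hypothetical path
}

# Launches the bastion and private instances using values from the other modules.
module "ec2" {
  source = "./modules/ec2" # hypothetical path

  # All of the names below are assumed module outputs.
  public_subnet_id  = module.networking.public_subnet_ids[0]
  private_subnet_id = module.networking.private_subnet_ids[0]
  public_sg_id      = module.networking.public_sg_id
  private_sg_id     = module.networking.private_sg_id
  key_name          = module.ssh_key.key_name
}
```

Because the EC2 module consumes outputs of the other two, Terraform infers the ordering on its own: networking and the key pair are created before the instances.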

Module 1 – Networking

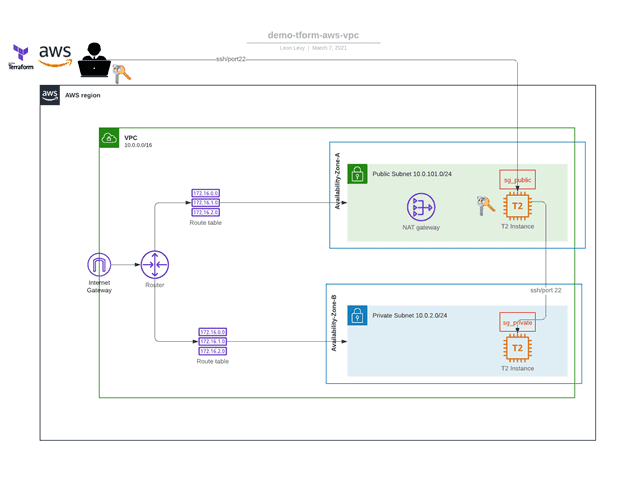

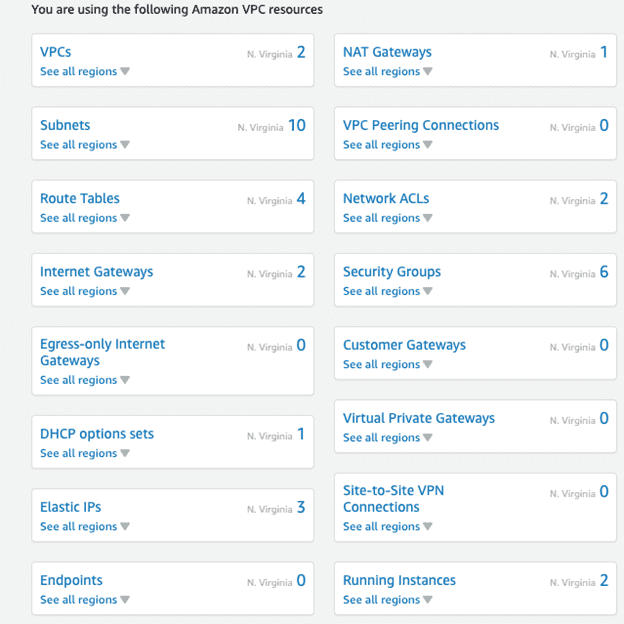

What this code will do:

- Create a custom VPC

- Define VPC name

- Create an Internet Gateway and a NAT gateway

- Define CIDR blocks

- Deploy two public subnets, across two different AZs

- Deploy two private subnets, across two different AZs

- Create two security groups (one for public, and one for private access)
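The core of a networking module along these lines could look like the following minimal sketch. Resource names and CIDR ranges are assumptions, and the two security groups are left out to keep it short. Route tables are not in the list above, but they are what actually send public traffic through the Internet Gateway and private traffic through the NAT gateway.

```hcl
# Sketch only: names and CIDR ranges are illustrative assumptions.
variable "vpc_cidr" {
  type    = string
  default = "10.0.0.0/16"
}

data "aws_availability_zones" "available" {
  state = "available"
}

resource "aws_vpc" "custom" {
  cidr_block           = var.vpc_cidr
  enable_dns_hostnames = true
  tags                 = { Name = "custom-vpc" }
}

resource "aws_internet_gateway" "igw" {
  vpc_id = aws_vpc.custom.id
}

# Two public subnets (10.0.0.0/24 and 10.0.1.0/24), one per AZ.
resource "aws_subnet" "public" {
  count                   = 2
  vpc_id                  = aws_vpc.custom.id
  cidr_block              = cidrsubnet(var.vpc_cidr, 8, count.index)
  availability_zone       = data.aws_availability_zones.available.names[count.index]
  map_public_ip_on_launch = true
}

# Two private subnets (10.0.10.0/24 and 10.0.11.0/24), one per AZ.
resource "aws_subnet" "private" {
  count             = 2
  vpc_id            = aws_vpc.custom.id
  cidr_block        = cidrsubnet(var.vpc_cidr, 8, count.index + 10)
  availability_zone = data.aws_availability_zones.available.names[count.index]
}

# Elastic IP for the NAT gateway ("domain" requires AWS provider v5 or later).
resource "aws_eip" "nat" {
  domain = "vpc"
}

# The NAT gateway lives in a public subnet and serves the private subnets.
resource "aws_nat_gateway" "nat" {
  allocation_id = aws_eip.nat.id
  subnet_id     = aws_subnet.public[0].id
  depends_on    = [aws_internet_gateway.igw]
}

resource "aws_route_table" "public" {
  vpc_id = aws_vpc.custom.id
  route {
    cidr_block = "0.0.0.0/0"
    gateway_id = aws_internet_gateway.igw.id
  }
}

resource "aws_route_table" "private" {
  vpc_id = aws_vpc.custom.id
  route {
    cidr_block     = "0.0.0.0/0"
    nat_gateway_id = aws_nat_gateway.nat.id
  }
}

resource "aws_route_table_association" "public" {
  count          = 2
  subnet_id      = aws_subnet.public[count.index].id
  route_table_id = aws_route_table.public.id
}

resource "aws_route_table_association" "private" {
  count          = 2
  subnet_id      = aws_subnet.private[count.index].id
  route_table_id = aws_route_table.private.id
}
```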

Module 2 – SSH-Key

What this code will do:

- Dynamically create an SSH Key pair that will be associated with the EC2 instances

- This SSH Key will be created dynamically, and be deleted along with all the other resources provisioned with Terraform.
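One common way to generate a key pair dynamically is to combine the `tls` and `local` providers with `aws_key_pair`, as in the sketch below. This is an assumption about the approach rather than a copy of the repository's module, and the key name is hypothetical.

```hcl
# Sketch only: the key name and file location are illustrative assumptions.
variable "key_name" {
  type    = string
  default = "demo-key"
}

# Generate a new RSA key pair during "terraform apply".
# Note: the private key is stored in the Terraform state file.
resource "tls_private_key" "ssh" {
  algorithm = "RSA"
  rsa_bits  = 4096
}

# Register the public half with AWS so instances can reference it by name.
resource "aws_key_pair" "generated" {
  key_name   = var.key_name
  public_key = tls_private_key.ssh.public_key_openssh
}

# Write the private half to the local system with restrictive permissions.
resource "local_sensitive_file" "private_key" {
  content         = tls_private_key.ssh.private_key_pem
  filename        = "${path.module}/${var.key_name}.pem"
  file_permission = "0400"
}

output "key_name" {
  value = aws_key_pair.generated.key_name
}
```

Since all three resources are managed by Terraform, the key pair and the local file are removed again when the project is destroyed.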

Module 3 – EC2

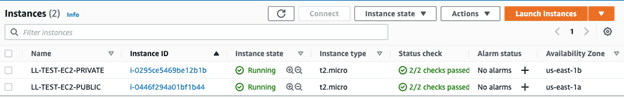

What this code will do:

- Create a t2.micro AWS Linux VM in the PUBLIC subnet for use as a bastion/gateway host.

- Terraform will copy the SSH Key from your local system to the VM and apply appropriate file permissions to it.

- This key will be used for connections to instances in the private subnet

- Create a t2.micro AWS Linux VM in the PRIVATE subnet
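The two instances described above might be declared roughly as follows. All variable names are hypothetical, the AMI filter assumes Amazon Linux 2, and the provisioners assume the private key file already exists at `private_key_path` on the local system.

```hcl
# Sketch only: every variable below is a hypothetical module input.
variable "public_subnet_id" { type = string }
variable "private_subnet_id" { type = string }
variable "public_sg_id" { type = string }
variable "private_sg_id" { type = string }
variable "key_name" { type = string }
variable "private_key_path" { type = string }

# Look up the most recent Amazon Linux 2 AMI (assumed image family).
data "aws_ami" "amazon_linux" {
  most_recent = true
  owners      = ["amazon"]
  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-*-x86_64-gp2"]
  }
}

# Bastion host in the public subnet.
resource "aws_instance" "bastion" {
  ami                         = data.aws_ami.amazon_linux.id
  instance_type               = "t2.micro"
  subnet_id                   = var.public_subnet_id
  vpc_security_group_ids      = [var.public_sg_id]
  key_name                    = var.key_name
  associate_public_ip_address = true

  # How Terraform reaches the instance to run the provisioners below.
  connection {
    type        = "ssh"
    user        = "ec2-user"
    host        = self.public_ip
    private_key = file(var.private_key_path)
  }

  # Copy the private key to the bastion so it can reach the private instance.
  provisioner "file" {
    source      = var.private_key_path
    destination = "/home/ec2-user/${var.key_name}.pem"
  }

  # Restrict permissions so ssh will accept the key file.
  provisioner "remote-exec" {
    inline = ["chmod 400 /home/ec2-user/${var.key_name}.pem"]
  }
}

# Instance in the private subnet, reachable only through the bastion.
resource "aws_instance" "private" {
  ami                    = data.aws_ami.amazon_linux.id
  instance_type          = "t2.micro"
  subnet_id              = var.private_subnet_id
  vpc_security_group_ids = [var.private_sg_id]
  key_name               = var.key_name
}
```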

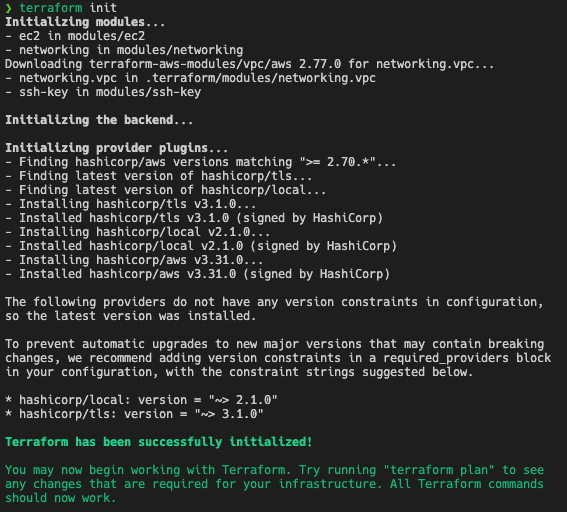

Note: To follow this tutorial, you will need to have Terraform and the AWS CLI installed and configured.

To get started, clone this GitHub repo to your local system and run the following commands:

“terraform init”

- This will initialize the working directory containing the Terraform configuration code, downloading the modules and provider plugins from HashiCorp that it needs.
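The plugins that “terraform init” downloads are determined by the providers the configuration uses. A configuration can also declare them explicitly, as in this sketch; the version constraints shown are assumptions, not values taken from the repository.

```hcl
# Sketch only: version constraints are illustrative assumptions.
terraform {
  required_version = ">= 1.0"

  required_providers {
    # AWS resources (VPC, subnets, EC2 instances, key pair)
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
    # Key generation
    tls = {
      source  = "hashicorp/tls"
      version = "~> 4.0"
    }
    # Writing files to the local system
    local = {
      source  = "hashicorp/local"
      version = "~> 2.4"
    }
  }
}
```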

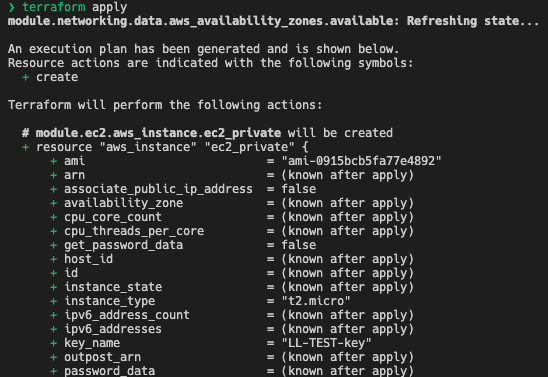

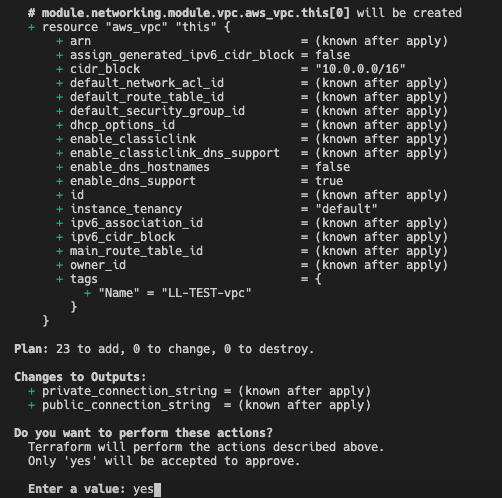

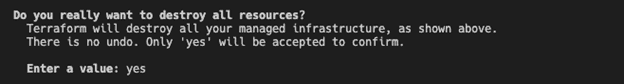

“terraform apply”

- This will first show an execution plan and report the resources to be deployed in AWS (23 resources in this example).

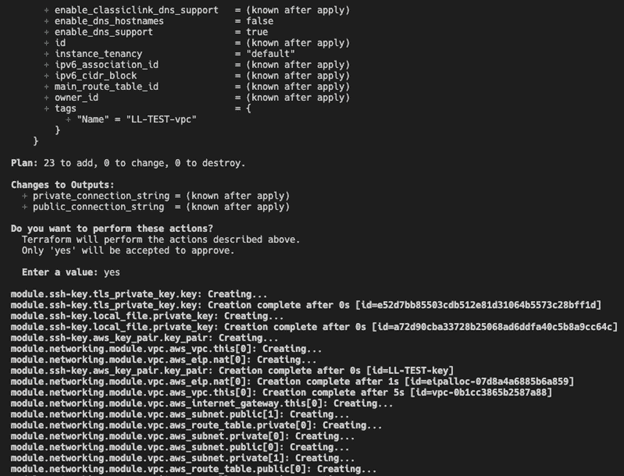

- Once you confirm by typing “yes,” Terraform will begin provisioning the VPC, EC2 instances, and the SSH-key pair in AWS.

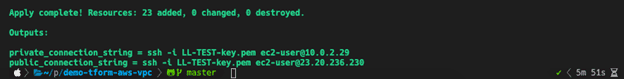

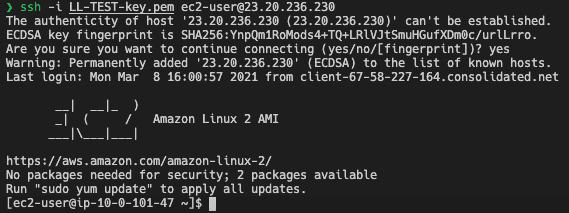

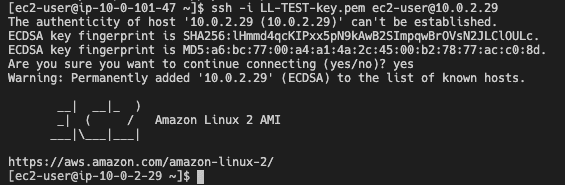

Now you can connect to the public EC2 instance using the public connection string, and once you are logged in to that VM, you can connect to the private EC2 instance with the private connection string.

(connecting to EC2 in public subnet from local host)

(connecting to EC2 in private subnet from bastion host)
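Connection strings like these are typically exposed as Terraform outputs, which are printed at the end of “terraform apply”. The sketch below shows one possible shape. The variable names are hypothetical stand-ins for values that would normally come from the instance resources, and `ec2-user` is the default login for Amazon Linux.

```hcl
# Sketch only: in a real module these inputs would be resource attributes
# such as the bastion's public IP and the private instance's private IP.
variable "key_file" {
  type    = string
  default = "demo-key.pem" # hypothetical key file name
}

variable "bastion_public_ip" { type = string }
variable "private_instance_ip" { type = string }

output "public_connection_string" {
  description = "Run from the local host to reach the bastion"
  value       = "ssh -i ${var.key_file} ec2-user@${var.bastion_public_ip}"
}

output "private_connection_string" {
  description = "Run from the bastion to reach the private instance"
  value       = "ssh -i ${var.key_file} ec2-user@${var.private_instance_ip}"
}
```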

To see all the components provisioned with Terraform, log in to the AWS web console and open the VPC and EC2 dashboards (make sure you are in the correct AWS region).

Delete Components of VPC

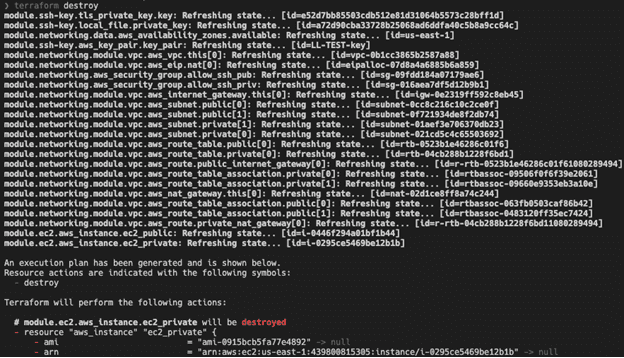

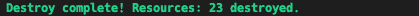

Imagine building all 23 of these AWS resources manually, then later needing to modify a resource in the VPC and having to work out the dependencies between these resources to do so. Also, an environment may only need to be available briefly for dev and test, so how do you go about deleting all of these resources when they are no longer needed? How many times have you received an unexpected bill from AWS charging you for resources that you forgot to delete? To avoid that, let’s automate this process and delete these EC2 instances and all the components that make up the newly created VPC with one command.

“terraform destroy”

- The “terraform destroy” command is used to destroy the Terraform-managed infrastructure. This will ask for confirmation before destroying.

- Once you confirm by typing “yes,” Terraform will delete all of the 23 AWS resources it created earlier. (Note: This will only destroy resources provisioned from the current project, nothing else.)

Automation at Scale

At scale, Terraform is part of a larger automation workflow and provides additional functionality that isn’t covered here, such as keeping track of the state of deployed resources. Terraform code can be managed and deployed the same way application code is deployed, through DevOps practices and automated CI/CD pipelines using tools like ServiceNow.