You may have heard once or twice in the news that AI is incredibly power-hungry. The infrastructure requirements for AI continue to grow, with higher capacities announced seemingly every month. In this article, we’ll break down the key facts your enterprise needs to know as you plan to adopt accelerated computing in your on-premises infrastructure.

First, some good news: despite the catchy blog title, most enterprises won’t be implementing one-megawatt racks anytime soon. Still, to understand part of why support for high-density racks is becoming vital, consider NVIDIA’s recent article about its highest-density Blackwell Ultra NVL72 systems, which makes a compelling case that these latest 130kW+ racks have achieved a 50× reduction in watts per token.

The key takeaway is that enterprises interested in accelerating their business with on-premises infrastructure should start planning the necessary facility capital improvements as soon as possible, even if the role of AI in the business is not yet fully understood. Lead times for these capital improvements are often measured in years, so waiting until the AI strategy is finalized could leave facilities unprepared.

Prepare for the Inevitable

For the many enterprises currently hosting anywhere from 10kW to 50–60kW per rack, getting to 130kW+, let alone 1MW per rack, may seem downright impossible. Uncomfortable as it may be for some, the reality is that 600kW racks are coming with NVIDIA Vera Rubin Ultra NVL576 (slated for 2027 delivery), and 1MW racks are already on roadmaps.

Reaching higher rack densities will ultimately require deploying Direct Liquid Cooling (DLC), along with a thoughtful methodology for doing so. This is critical because NVIDIA’s next GPU generation after Blackwell, known as Rubin, is likely to be available exclusively in a DLC form factor, with no air-cooled option. Given the silicon density and thermal design power (TDP) of future GPUs from NVIDIA and other vendors, direct-to-chip liquid cooling will be the only viable way to cool them going forward. The same could eventually apply to high-core-count CPUs from Intel, AMD, NVIDIA, Arm, and others, though that time horizon appears less immediate. Even before it becomes a hard requirement, DLC for CPUs can improve power usage effectiveness (PUE), density, and performance. For GPU systems, DLC should be treated as a near-hard requirement, effective immediately.

A reasonable two-year aim for enterprises is to support a subset of their rack infrastructure at up to ~150kW per rack, with 80% or more of that heat removed via DLC. For a five-year strategic planning goal, supporting 500kW per rack for some portion of the rack infrastructure, with 95–100% of that heat removed by direct liquid cooling, is a realistic target. Ideally, this would be done so that capacity is additive (e.g., combining two 500kW rack positions to support a single 1MW rack deployment).
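To make these targets concrete, the sketch below splits a rack’s heat load into the portion removed by DLC and the residual that room air cooling must still handle, and counts how many rack positions an additive design would combine. The function names are hypothetical; the 150kW/80% and 500kW/95% figures come from the targets above.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class RackHeatSplit:
    total_kw: float
    liquid_kw: float  # heat captured by direct liquid cooling (DLC)
    air_kw: float     # residual heat the room's air cooling must still remove


def split_rack_heat(total_kw: float, dlc_percent: float) -> RackHeatSplit:
    """Split a rack's heat load between DLC and air, given the DLC share in percent."""
    if total_kw < 0:
        raise ValueError("total_kw must be non-negative")
    if not 0 <= dlc_percent <= 100:
        raise ValueError("dlc_percent must be between 0 and 100")
    liquid_kw = total_kw * dlc_percent / 100
    return RackHeatSplit(total_kw, liquid_kw, total_kw - liquid_kw)


def positions_needed(target_rack_kw: float, position_kw: float) -> int:
    """Number of standard rack positions whose combined capacity covers one larger rack."""
    if position_kw <= 0:
        raise ValueError("position_kw must be positive")
    return math.ceil(target_rack_kw / position_kw)


if __name__ == "__main__":
    print(split_rack_heat(150, 80))   # two-year target: ~150kW rack, 80% DLC
    print(split_rack_heat(500, 95))   # five-year target: 500kW rack, 95% DLC
    print(positions_needed(1000, 500))  # 1MW rack built from 500kW positions
```

In this sketch, the 150kW/80% case still leaves 30kW of air-side heat per rack, comparable to the total load of many racks in service today, while the 500kW/95% case leaves 25kW. That residual air load is one reason the DLC share needs to climb as densities do.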

Build the Infrastructure Roadmap

The shift toward higher rack power densities and the growing inevitability of direct liquid cooling require a deliberate, infrastructure-led strategy. Enterprises should begin with a clear-eyed assessment of their existing data center and colocation footprint to understand whether current power and cooling systems can be upgraded to support next-generation workloads. In many cases, capacity can be reclaimed by consolidating legacy CPU clusters into more efficient architectures or by relocating select on-premises workloads to cloud or SaaS platforms. Those changes can create the headroom needed to re-engineer portions of a facility for high-density deployments.
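As a rough illustration of how consolidation creates headroom, the sketch below compares the power drawn by a legacy CPU footprint before and after consolidation, then counts how many high-density racks the freed power could feed. All rack counts and per-rack figures are hypothetical, the function names are illustrative, and the calculation is power-only: a real assessment must also check cooling capacity, redundancy, and floor loading.

```python
import math


def reclaimed_kw(legacy_racks: int, legacy_kw_per_rack: float,
                 consolidated_racks: int, consolidated_kw_per_rack: float) -> float:
    """Power freed by consolidating legacy CPU racks onto fewer, denser racks."""
    before = legacy_racks * legacy_kw_per_rack
    after = consolidated_racks * consolidated_kw_per_rack
    return max(0.0, before - after)


def high_density_racks_supported(headroom_kw: float, target_kw_per_rack: float) -> int:
    """Whole high-density racks that fit in the reclaimed power budget (power only)."""
    if target_kw_per_rack <= 0:
        raise ValueError("target_kw_per_rack must be positive")
    return math.floor(headroom_kw / target_kw_per_rack)


if __name__ == "__main__":
    # Hypothetical footprint: 40 legacy racks at 12kW consolidated onto 10 racks at 30kW.
    headroom = reclaimed_kw(40, 12, 10, 30)
    print(headroom, high_density_racks_supported(headroom, 150))
```

Here, consolidating 480kW of legacy load into 300kW frees 180kW, enough on paper for one 150kW rack. That is why consolidation alone rarely covers a large accelerated computing footprint.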

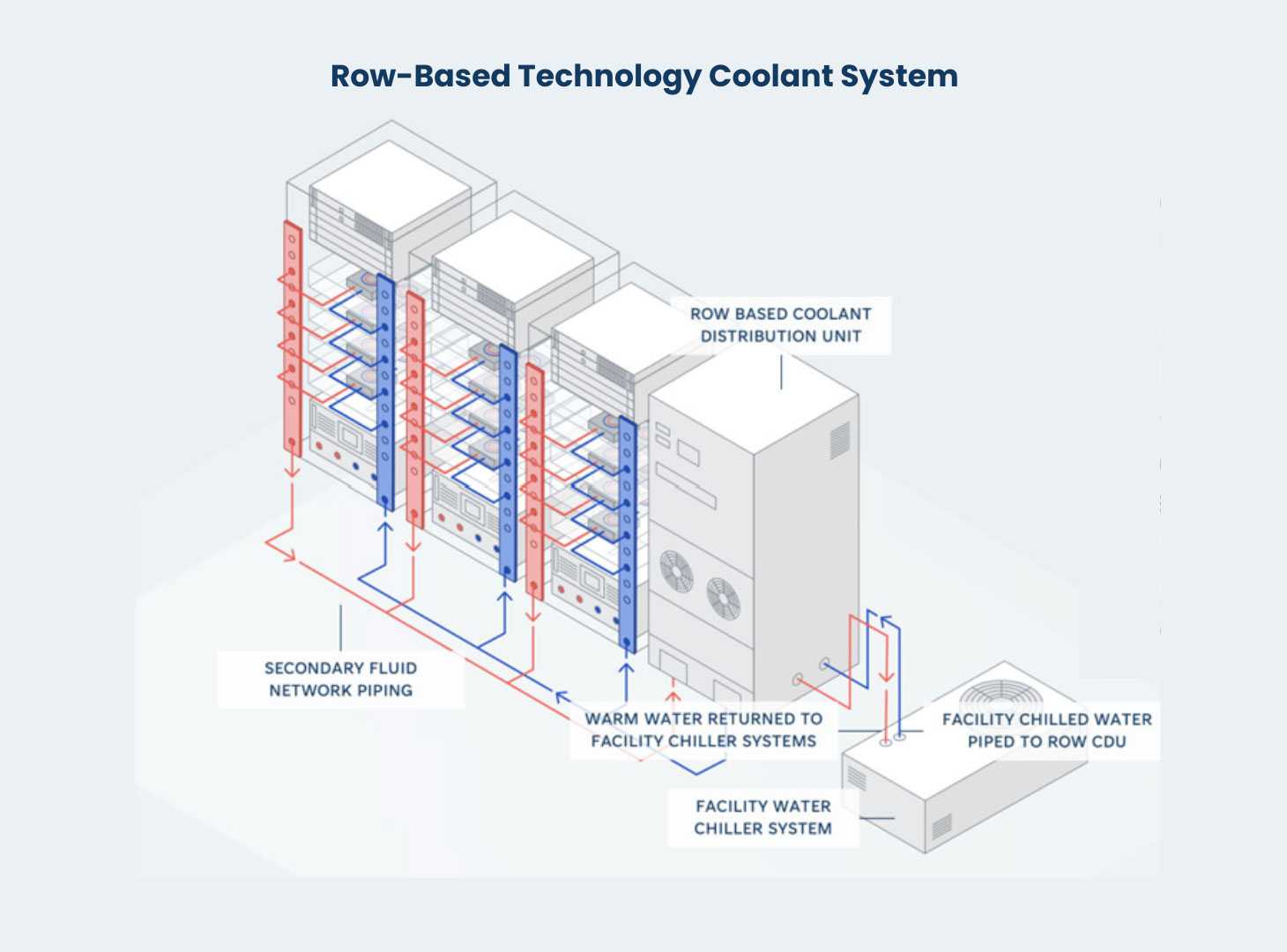

Where feasible, upgraded environments may warrant redesigned power distribution and will require a Technology Cooling System (TCS), also known as a Secondary Fluid Network. The TCS loop connects to the existing primary facility water loop through Coolant Distribution Units (CDUs), which may require updates to that primary loop. This analysis and execution demand coordination across facilities teams, engineering partners, and equipment vendors to confirm that the available water infrastructure can support the CDUs required for liquid-cooled environments.
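One first-order input to that water-availability check is the coolant flow a given liquid-side heat load requires. The sketch below applies the standard heat-balance relation Q = ṁ · cp · ΔT. It assumes plain water properties; real TCS loops often use glycol mixtures with a lower specific heat, and actual CDU selection also depends on approach temperature and pressure drop, which this ignores. The function name and the 120kW / 10 K inputs are hypothetical.

```python
WATER_CP_KJ_PER_KG_K = 4.186  # specific heat of water, approx., near room temperature
WATER_DENSITY_KG_PER_L = 1.0  # rounded; about 0.997 kg/L at 25 degC


def required_flow_lpm(heat_kw: float, delta_t_k: float,
                      cp_kj_per_kg_k: float = WATER_CP_KJ_PER_KG_K,
                      density_kg_per_l: float = WATER_DENSITY_KG_PER_L) -> float:
    """Coolant flow (L/min) needed to carry heat_kw at a given supply/return delta T.

    Uses Q = m_dot * cp * dT; with Q in kW (kJ/s), m_dot = Q / (cp * dT) is in kg/s.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)
    return mass_flow_kg_s / density_kg_per_l * 60.0


if __name__ == "__main__":
    # Hypothetical: 120kW of liquid-side heat (a 150kW rack at 80% DLC) with a 10 K rise.
    print(f"{required_flow_lpm(120, 10):.0f} L/min")
```

Because flow scales inversely with ΔT, halving the allowed temperature rise doubles the required flow, which is one reason the primary loop design matters as much as the CDUs themselves.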

In some situations, even substantial capital improvements will not bring a legacy facility in line with future power and cooling roadmaps. In those cases, enterprises may look to new colocation environments purpose-built for higher densities. This does not require a wholesale migration of all workloads. Accelerated computing clusters can be deployed in new facilities while remaining tightly connected to existing environments through well-architected networking, storage, and security integration.

From initial assessment and strategy through execution, AHEAD can help with:

- Evaluating existing data center and colocation environments for high-density and liquid cooling readiness

- Defining practical upgrade paths for power distribution and cooling infrastructure

- Modernizing and consolidating legacy CPU environments to reclaim capacity

- Assessing cloud and SaaS migration opportunities through enterprise architecture and FinOps analysis

- Engineering high-density rack environments within existing facilities

- Designing and integrating Technology Cooling Systems

- Validating primary facilities water availability and mechanical compatibility

- Collaborating with facilities teams, colocation providers, and equipment vendors

- Identifying and securing colocation facilities purpose-built for higher power densities

- Architecting GPU-based accelerated computing clusters with integrated networking and storage

- Ensuring secure, high-performance connectivity between new AI infrastructure and existing enterprise applications

Final Thoughts

A small subset of enterprises may see 1MW racks in the near term, but far more organizations will need to support 100–500kW racks within the next two to five years. The constraint enterprises must contend with is not vision but infrastructure lead time. Power and cooling upgrades often take 18 to 36 months, which means facility planning must get ahead of finalized AI use cases.
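Because lead time is the binding constraint, it can help to work backward from the date high-density capacity is needed. The sketch below subtracts the 18- and 36-month lead times cited above from a hypothetical target date to bracket when upgrade work would need to begin; the function names and the target date are illustrative only.

```python
import calendar
from datetime import date


def subtract_months(d: date, months: int) -> date:
    """Move a date back by whole months, clamping the day to the target month's length."""
    index = d.year * 12 + (d.month - 1) - months
    year, month_zero_based = divmod(index, 12)
    month = month_zero_based + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def start_window(needed_by: date, min_lead_months: int = 18,
                 max_lead_months: int = 36) -> tuple[date, date]:
    """Return (start date for the longest lead time, start date for the shortest)."""
    return (subtract_months(needed_by, max_lead_months),
            subtract_months(needed_by, min_lead_months))


if __name__ == "__main__":
    conservative, optimistic = start_window(date(2027, 7, 1))  # hypothetical target date
    print(f"Start by {conservative} (36-month lead); {optimistic} works only with an 18-month lead")
```

For the hypothetical mid-2027 target, the window runs from July 2024 to January 2026, well before most enterprises will have settled their AI use cases.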

Enterprises don’t need to transform every rack overnight, but they do need a defined path to introduce higher-density capacity in a controlled and additive way. That requires early assessment, realistic roadmapping, and coordination across IT and facilities teams.

AHEAD helps organizations align infrastructure modernization with accelerated computing plans so that when demand increases, the environment is ready to support it.

Get in touch with us today to learn more.
